THIS IS WHAT SCALING LOOKS LIKE

The Hidden Cost of Deep Learning

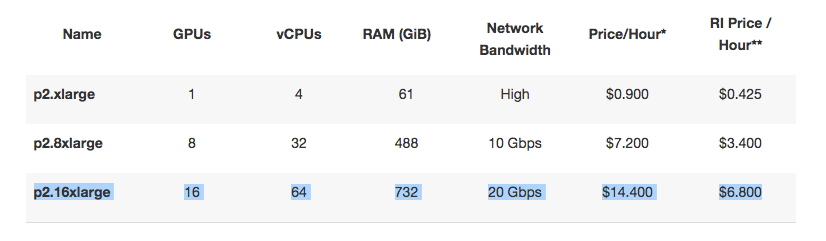

Anyone who has worked with deep learning knows that some models and model configurations require tremendous amounts of computing power. Given the opportunity, few will turn down a high-powered cloud instance stacked with an array of Tesla K80s, such as an AWS cloud instance on steroids.

We’re like kids playing computer games, whose only objective is to gain an advantage in the game. Take LSTM as an example: it is like the latest version of the hottest gaming franchise. Everybody wants to play it, everybody wants to be good at it, but very few ask what it costs. We don’t see the cost because in most cases we do our work from a cozy office and somebody else pays the cloud bill. If we work from home and use our own hardware, the cost we see is limited to our credit card bill. Once that’s paid, we consider the cost settled. But it’s not that simple.

Take rare earths as an example: precious minerals at the heart of the computer era. China controls close to 100% of the world’s rare earth supply, and it is not possible to build a single silicon-based chip without them. The most obvious cost associated with rare earths has to do with the environment. In the areas surrounding China’s rare earth operations, everyone is ill. The ecology is destroyed beyond imagination. Take, for example, the lake in the image below, photographed at the Baogang Steel and Rare Earth complex. Yes, that is a lake. When we sign up for a cloud instance, buy new hardware, or run an elaborate model that takes a week to train, we rarely think about something like this. We rarely think about the cost of what we are doing, because it seems that we are not the ones paying the price.

The other side of the ecological picture relates to device manufacturing. Manufacturing a laptop accounts for 70% of the total energy it consumes over its lifetime [1]. In other words, before it is ever used, it has already consumed 70% of the energy it will ever consume. The energy used in manufacturing comes from energy production, which itself requires a large amount of energy. We produce goods that require energy, and that energy is produced by plants that themselves consume significant amounts of energy and use equipment that takes energy to produce.

Energy and Carbon Cost of Deep Learning

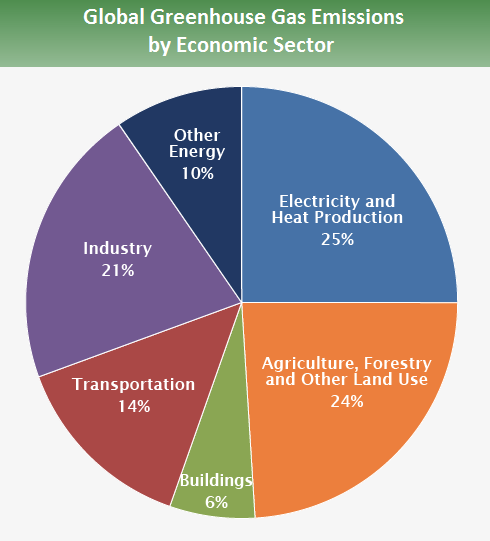

Let’s take an arbitrary figure of 100 units of energy, and assume that producing it costs 10 units of energy (in reality it’s more). This implies a perpetual cost: first 10 units are needed to produce the 100 units, then 1 is needed to produce the 10, then 0.1 to produce the 1, ad infinitum. Summed up, the 100 units end up costing a little over 111. The more the beast (total current production) grows, the more its tail grows with it. Even after a few hours of Google searching, it is not clear exactly how large a share of global energy consumption goes to energy production itself, but the carbon emission side is clear. Energy production contributes slightly over 1/3 of all greenhouse gases globally [2].
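The "cost of the cost" described above is a geometric series. Here is a minimal Python sketch of it, assuming a constant overhead ratio at every level (the 10% figure is the arbitrary one from the paragraph, and the function name is made up for illustration):

```python
from typing import Optional


def total_energy_with_overhead(
    delivered_units: float,
    overhead_ratio: float,
    rounds: Optional[int] = None,
) -> float:
    """Total energy needed to deliver `delivered_units` when producing
    every unit of energy itself costs `overhead_ratio` units of energy.

    With rounds=None the infinite series is summed in closed form:
        E * (1 + r + r^2 + ...) = E / (1 - r)
    Otherwise only the first `rounds` levels of overhead are added.
    """
    if not 0 <= overhead_ratio < 1:
        raise ValueError("overhead_ratio must be in [0, 1) for the series to converge")
    if rounds is None:
        return delivered_units / (1 - overhead_ratio)
    return sum(delivered_units * overhead_ratio ** k for k in range(rounds + 1))


# The arbitrary example from the text: 100 units at a 10% overhead.
after_three_levels = total_energy_with_overhead(100, 0.1, rounds=3)  # 100 + 10 + 1 + 0.1
in_the_limit = total_energy_with_overhead(100, 0.1)                  # 100 / 0.9
print(round(after_three_levels, 2), round(in_the_limit, 2))
```

The tail converges quickly at a 10% overhead, but it grows sharply as the ratio rises: at 50% overhead, the same 100 units cost 200.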

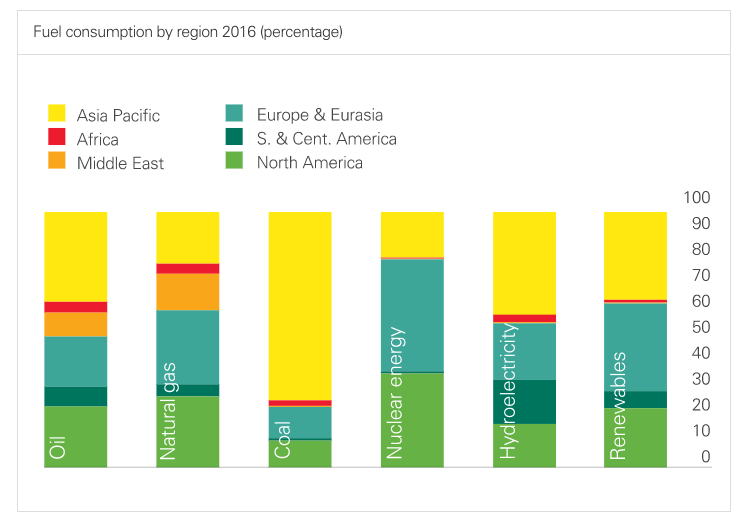

This should not be surprising, given how heavily global energy production relies on coal and other fossil fuels such as natural gas. These two are the most popular sources, and also the most cost-effective to produce. The situation is particularly bad in Asia Pacific, where the great majority of computing components are manufactured.

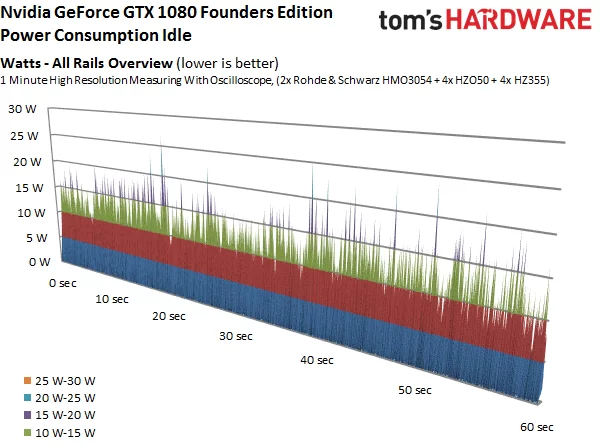

So the first consideration is the manufacturing of devices: the energy cost of making servers, desktops, laptops and components such as GPUs. The second consideration is how much energy those devices and components consume once they are in use. Both CPU and GPU use are highly energy-intensive, and regardless of where you live, part of that juice comes from coal and other dirty energy. The same is true for the data centers where we get our cloud instances. Even Norway, which on paper gets 100% of its energy from renewable sources, ends up consuming a lot of coal because of the way it is connected to the regional energy exchange grid. So how much energy does a regular consumer GPU use? About four iPhones’ worth when the GPU is idle. The peak energy consumption is roughly 20x higher.
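To make the in-use cost concrete, here is a rough Python sketch that turns power draw and run time into energy and emissions. All numeric inputs are placeholders rather than measurements: the 250 W draw and the 0.5 kg CO2 per kWh grid intensity are assumptions chosen only to show the arithmetic, and the function names are hypothetical.

```python
def training_energy_kwh(power_watts: float, hours: float) -> float:
    """Energy used by a device drawing `power_watts` for `hours`, in kWh."""
    return power_watts * hours / 1000.0


def emissions_kg_co2(energy_kwh: float, grid_intensity_kg_per_kwh: float) -> float:
    """CO2 emitted for `energy_kwh`, given the carbon intensity of the grid."""
    return energy_kwh * grid_intensity_kg_per_kwh


# Placeholder inputs (assumptions, not measured values):
gpu_power_watts = 250      # hypothetical sustained draw of one GPU under load
hours_in_a_week = 7 * 24   # a model that "takes a week to train"
grid_intensity = 0.5       # hypothetical kg CO2 per kWh for a fossil-heavy grid

energy = training_energy_kwh(gpu_power_watts, hours_in_a_week)
co2 = emissions_kg_co2(energy, grid_intensity)
print(f"{energy:.1f} kWh, {co2:.1f} kg CO2 for one GPU over one week")
```

Swapping in a measured power draw and the published carbon intensity of your own grid gives a first-order estimate. It ignores cooling, the rest of the machine, and the manufacturing cost discussed earlier.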

Bitcoin mining, the second field after gaming where GPUs became popular, is notoriously inefficient in terms of energy consumption. In fact, Bitcoin mining consumes more energy than many mid-size countries do in total [3]. There is an interesting parallel here: once deep learning becomes widely popular, particularly in its more advanced forms such as the highly energy-intensive LSTM, an arms race takes off, one not too different from what we see in cryptocurrency mining today. The more miners (data scientists) compete for the same outcome (predictions), the greater the incentive to develop more advanced algorithms (deep learning models) and to adopt ever more powerful computing. As is already the case with cryptocurrency mining, this will come at a great expense to the global ecology, and most of it will be powered by coal and other fossil fuels. The most worrying aspect is that while there is a lot of talk about Bitcoin’s energy problem, there is none about the exact same energy problem that comes with more advanced deep learning approaches. We should start talking about this now, and not when deep learning has already become the standard way of making predictions.

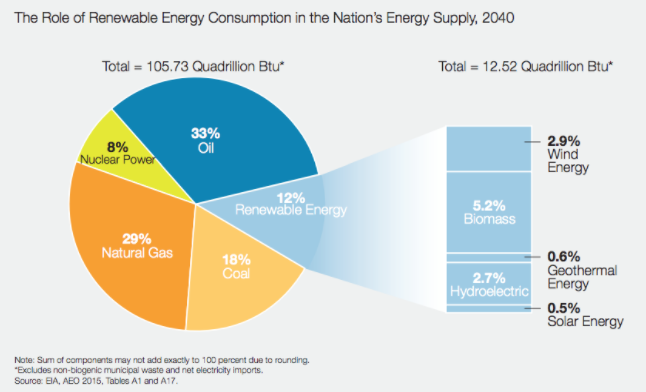

To get a clear picture of just how dominant fossil fuels are in global energy consumption, let’s look at the global aggregate. Even by 2040, just 12% of all energy production is projected to be renewable, and 8% nuclear. The remaining 80% will still come from fossil fuels.

In summary, the cost of deep learning to ecology comes from the way raw materials for hardware are extracted, the energy cost of manufacturing devices, the energy cost of computing operations, and the energy cost of producing that energy in the first place. All of these put a significant burden on the global ecology, and raise an important question for deep learning innovators and practitioners: how can things be done in a way that considers not just what is possible, but what is reasonable and what is sustainable in the longer term? It is not that we should become reserved about adopting deep learning methods more widely, but that we should become more intelligent about it.

Deep Learning and Sustainability

First and foremost, there should be a clear distinction between what is widely considered scaling today and what scaling actually means in the computing context. The IT and cloud companies would like us to think that scaling means throwing more resources at the problem. It doesn’t. That idea is rooted in the conventional meaning of scaling, i.e. that something simply becomes bigger or better. In the computing context, “scaling” has a different meaning, which is revealed in the question “how well does this system scale?” Every system will scale as much as needed in the conventional sense, i.e. grow bigger, but that is not what the question asks. In the computing context, scaling is about the efficiency of your program: if we do the same thing we are doing now, but a lot more of it, is it still feasible? Because the IT and cloud companies have virtually infinite resources, and keeping those resources as highly utilized as possible is their business, they would like us to think that it’s OK to invest a lot in computing resources. This mindset is not only dangerous for the ecology, it also makes democratization impossible: the less money you have, the less able you are to adopt deep learning methods. That is the direction we are heading in at the moment.

A smart computer scientist, or data scientist, thinks differently and finds ways to make the model run with minimal computing resources without negatively affecting the output. He or she knows that throwing more boxes at a problem is not scaling. It’s the opposite of scaling, because it reduces efficiency, or at best has no effect on it, rather than improving it. The fact that your model runs faster just because you invested more in hardware does not imply any efficiency gain, and therefore cannot be considered scaling in the computing context.
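One common way to express this distinction is to divide the speedup by the extra resources it took to get it. The Python sketch below assumes that framing, which is not a formula taken from this article. The timings are invented for illustration and the function names are hypothetical.

```python
def speedup(baseline_seconds: float, scaled_seconds: float) -> float:
    """How many times faster the job runs after adding resources."""
    return baseline_seconds / scaled_seconds


def resource_efficiency(
    baseline_seconds: float,
    scaled_seconds: float,
    resource_multiplier: float,
) -> float:
    """Speedup per unit of added resources.

    1.0 means the extra hardware was fully used (no efficiency change),
    below 1.0 means efficiency dropped even though the job got faster.
    """
    return speedup(baseline_seconds, scaled_seconds) / resource_multiplier


# Invented example: one box takes 100 s, four boxes take 40 s.
print(speedup(100, 40))                 # 2.5x faster
print(resource_efficiency(100, 40, 4))  # 0.625: faster, but less efficient
```

By this measure, adding boxes can at best hold efficiency at 1.0. Only a change to the model or the program itself, one that lowers the baseline time on the same hardware, improves it.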

The second point relates to the kinds of models we use. The examples that popular deep learning libraries ship in their GitHub repos include some startling cases of inefficiency. One is using LSTM for a problem where you can get the same or a better result with a dense layer network, with the LSTM version taking overnight to run and the dense layer one 20 seconds. Even if you do get a 1% increase in prediction capability but end up using 1,000x more computing resources (and energy), you must ask whether it is worth the CO2 contribution and the other negative effects that come with it. While research focused on the energy efficiency of a single model has started to appear recently, no comprehensive comparison of common models in this context is available.
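The trade-off above can be put into numbers with a few lines of Python. This is only a sketch: "overnight" is assumed here to mean 8 hours, which the article does not state, and the helper names are hypothetical.

```python
def compute_multiple(expensive_seconds: float, cheap_seconds: float) -> float:
    """How many times more run time the expensive model needs."""
    return expensive_seconds / cheap_seconds


def gain_per_compute_multiple(accuracy_gain_points: float, multiple: float) -> float:
    """Percentage points of accuracy gained per 1x of additional compute."""
    return accuracy_gain_points / multiple


# LSTM "overnight" (assumed to be 8 hours) versus a dense network at 20 seconds.
overnight_seconds = 8 * 60 * 60
print(compute_multiple(overnight_seconds, 20))  # 1440x the run time

# The article's hypothetical: +1 percentage point for 1,000x the compute.
print(gain_per_compute_multiple(1.0, 1000))
```

Reporting a ratio like this next to the accuracy figure makes it visible when a marginal gain is bought with orders of magnitude more energy.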

Finally, we have to get better at looking deeper into the effects of what we are doing. There needs to be an open discussion in the wider global deep learning community about the potential issues related to energy consumption before we go ahead and establish “best practices” that end up proving unsustainable. Researchers who publish papers highlighting the benefits of their latest models should also consider energy efficiency, and not just novelty or marginal gains over something that is already widely available. Last but not least, technology developers should openly discuss the potential negative effects of wider adoption of deep learning frameworks in data science practice. Ultimately, deep learning solutions specifically, and machine learning solutions more generally, should be governed by regulations similar to those that govern, for example, cars today.

References

[1] https://www.journals.elsevier.com/journal-of-cleaner-production/

[2] https://www.epa.gov/ghgemissions/global-greenhouse-gas-emissions-data

[3] http://cryptowizzard.com/eth-mining-calculator/